import random
import time
from urllib.parse import urljoin

import pandas as pd
import requests
from bs4 import BeautifulSoup
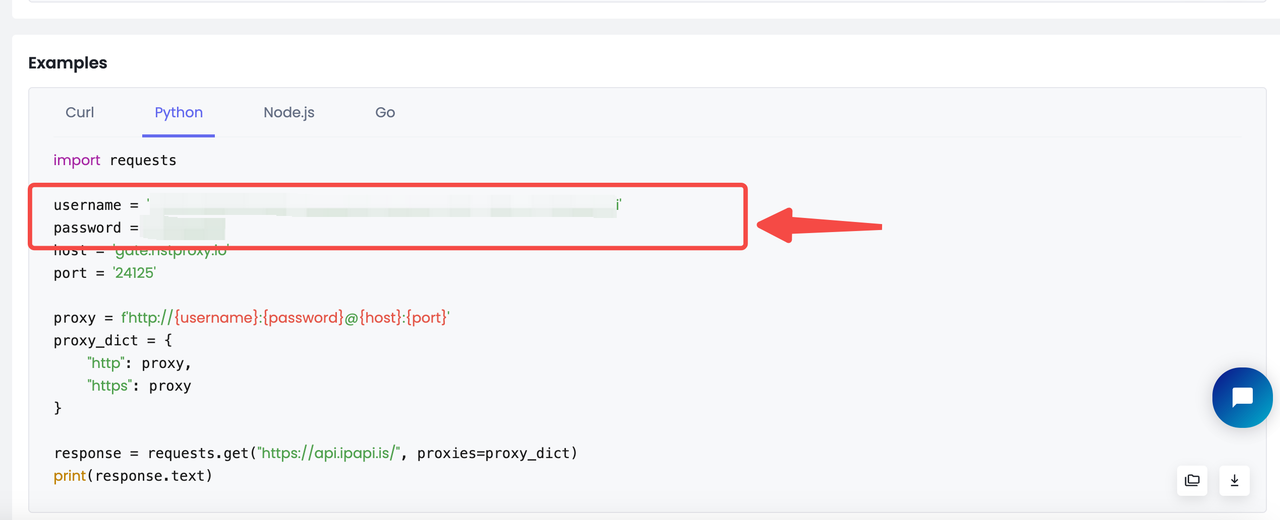

# Nstproxy credentials and gateway; replace the placeholders with your own.
username = 'YOUR Nstproxy Username'
password = 'Your password'
host = 'gate.nstproxy.io'
port = '24125'

proxy = f'http://{username}:{password}@{host}:{port}'

# Route both HTTP and HTTPS traffic through the same proxy.
proxies = {
    "http": proxy,
    "https": proxy
}

# Browser-like headers sent with every request.
# Note: requests can only decode 'br' responses if a Brotli package is installed.
custom_headers = {
    'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/138.0.0.0 Safari/537.36',
    'Accept-Language': 'da, en-gb, en',
    'Accept-Encoding': 'gzip, deflate, br',
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.7',
    'Referer': 'https://www.google.com/'
}

def parse_listing(listing_url, visited_urls, current_page=1, max_pages=2):
    # Fetch one search-results page through the proxy.
    resp = requests.get(
        listing_url, headers=custom_headers, proxies=proxies
    )
    print(resp.status_code)

    soup_search = BeautifulSoup(resp.text, 'lxml')
    link_elements = soup_search.select(
        '[data-cy="title-recipe"] > a.a-link-normal'
    )
    page_data = []

    for link in link_elements:
        # Resolve relative product links against the listing URL.
        full_url = urljoin(listing_url, link.attrs.get('href'))
        if full_url not in visited_urls:
            visited_urls.add(full_url)
            print(f'Scraping product from {full_url[:100]}', flush=True)
            product_info = get_product_info(full_url)
            if product_info:
                page_data.append(product_info)
            # Pause a random 3 to 7 seconds between product requests.
            time.sleep(random.uniform(3, 7))

    # Follow the "next" pagination link until max_pages is reached.
    next_page_el = soup_search.select_one('a.s-pagination-next')
    if next_page_el and current_page < max_pages:
        next_page_url = next_page_el.attrs.get('href')
        next_page_url = urljoin(listing_url, next_page_url)
        print(
            f'Scraping next page: {next_page_url} '
            f'(Page {current_page + 1} of {max_pages})',
            flush=True
        )
        page_data += parse_listing(
            next_page_url, visited_urls, current_page + 1, max_pages
        )

    return page_data

def get_product_info(url):
    resp = requests.get(url, headers=custom_headers, proxies=proxies)
    if resp.status_code != 200:
        print(f'Error in getting webpage: {url}')
        return None

    soup = BeautifulSoup(resp.text, 'lxml')

    # Each field falls back to None when its element is missing from the page.
    title_element = soup.select_one('#productTitle')
    title = title_element.text.strip() if title_element else None

    price_e = soup.select_one('#corePrice_feature_div span.a-offscreen')
    price = price_e.text if price_e else None

    rating_element = soup.select_one('#acrPopover')
    rating_text = rating_element.attrs.get('title') if rating_element else None
    # Strip the fixed suffix and surrounding whitespace, leaving only the number.
    rating = rating_text.replace('out of 5 stars', '').strip() if rating_text else None

    image_element = soup.select_one('#landingImage')
    image = image_element.attrs.get('src') if image_element else None

    description_element = soup.select_one(
        '#productDescription, #feature-bullets > ul'
    )
    description = (
        description_element.text.strip() if description_element else None
    )

    return {
        'title': title,
        'price': price,
        'rating': rating,
        'image': image,
        'description': description,
        'url': url
    }

def main():
    visited_urls = set()
    search_url = 'https://www.amazon.com/s?k=apple'

    # Crawl the search results, then save the collected products as CSV.
    data = parse_listing(search_url, visited_urls)
    df = pd.DataFrame(data)
    df.to_csv('apple.csv', index=False)


if __name__ == '__main__':
    main()
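
The link-handling step in `parse_listing` (resolve each `href` with `urljoin`, then skip anything already in `visited_urls`) can be tried offline, without a proxy or network access. The sketch below is only an illustration: the helper name `collect_new_urls` and the sample `href` values are hypothetical, not real Amazon product paths. Unlike the scraper above, it skips links with no `href` rather than passing `None` to `urljoin`.

```python
from urllib.parse import urljoin


def collect_new_urls(listing_url, hrefs, visited_urls):
    """Resolve hrefs against listing_url and return only unseen absolute URLs.

    Hypothetical helper for illustration; it mirrors the de-duplication
    step in parse_listing but performs no network requests.
    """
    new_urls = []
    for href in hrefs:
        if not href:  # an <a> tag without an href yields None
            continue
        full_url = urljoin(listing_url, href)
        if full_url not in visited_urls:
            visited_urls.add(full_url)
            new_urls.append(full_url)
    return new_urls


# Made-up hrefs, including one duplicate and one missing value.
visited = set()
sample_hrefs = ['/dp/EXAMPLE1', '/dp/EXAMPLE2', '/dp/EXAMPLE1', None]
urls = collect_new_urls('https://www.amazon.com/s?k=apple', sample_hrefs, visited)
print(urls)
```

Because `visited` is shared across calls, passing the same set when processing a second results page would also filter out products already seen on the first page, which is how the recursive `parse_listing` call avoids scraping a product twice.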
